Terraform

Terraform is a tool for building, changing, and versioning infrastructure safely and efficiently. It was created by HashiCorp and first released in 2014. Terraform can manage existing and popular service providers as well as custom in-house solutions. It is a popular tool in DevOps.

Introduction

- Infrastructure as Code

- Used for the automation of your infrastructure

- It keeps your infrastructure in a certain state (compliant)

- E.g., 2 web instances and 2 volumes and 1 load balancer

- It makes your infrastructure auditable

- That is, you can keep your infrastructure change history in a version control system (e.g., git)

The main reason to use Terraform over CAPS (Chef, Ansible, Puppet, Salt) is that those tools focus on automating the installation and configuration of software (i.e., keeping the machines in compliance and in a certain state). Terraform, by contrast, automates the provisioning of the infrastructure itself (e.g., in AWS or GCP). One can, of course, do the same with, say, Ansible, but infrastructure management and automation is where Terraform really shines.

Terraform cheatsheet

- General commands

- Get Terraform version:

$ terraform version

- Download and update the modules referenced in the root module:

$ terraform get -update=true

- Open the Terraform interactive console:

$ terraform console

- Create a DOT diagram of Terraform dependencies:

$ terraform graph | dot -Tpng > graph.png

- Format Terraform code to HCL standards:

$ terraform fmt

- Validate Terraform code syntax:

$ terraform validate

- Enable tab auto-completion in the terminal

$ terraform -install-autocomplete

- Show information about provider requirements:

$ terraform providers

- Login and logout of Terraform Cloud:

$ terraform login

$ terraform logout

- Workspaces

- List the available workspaces

$ terraform workspace list

- Create a new workspace:

$ terraform workspace new development

- Select an existing workspace:

$ terraform workspace select default

- Initialize Terraform

- Initialize Terraform in the current working directory:

$ terraform init

- Skip plugin installation:

$ terraform init -get-plugins=false

- Force plugin installation from a directory:

$ terraform init -plugin-dir=PATH

- Upgrade modules and plugins at initialization:

$ terraform init -upgrade

- Update backend configuration:

$ terraform init -migrate-state -force-copy

- Skip backend configuration:

$ terraform init -backend=false

- Supply backend configuration from a file:

$ terraform init -backend-config=FILE

- Change state lock timeout (default is zero seconds):

$ terraform init -lock-timeout=120s

- Plan Terraform

- Produce a plan with difference between code and state:

$ terraform plan

- Output a plan file for reference during apply:

$ terraform plan -out current.tfplan

- Output a plan to show effect of Terraform destroy:

$ terraform plan -destroy

- Target a specific resource for deploying:

$ terraform plan -target=ADDRESS

Note that the -target option is also available for the terraform apply and terraform destroy commands.

- Outputs

- List available outputs:

$ terraform output

- Output a specific value:

$ terraform output NAME

- Apply Terraform

- Apply the current state of Terraform code:

$ terraform apply

- Specify a previously generated plan to apply:

$ terraform apply current.tfplan

- Enable auto-approval for automation:

$ terraform apply -auto-approve

- Destroy Terraform

- Destroy resources managed by Terraform state:

$ terraform destroy

- Enable auto-approval for automation:

$ terraform destroy -auto-approve

- Manage Terraform State

- List all resources in Terraform state:

$ terraform state list

- Show details about a specific resource:

$ terraform state show ADDRESS

- Track an existing resource in state under a new name:

$ terraform state mv SOURCE DESTINATION

- Import a manually-created resource into state:

$ terraform import ADDRESS ID

- Pull state and save to a local file:

$ terraform state pull > terraform.tfstate

- Push state to a remote location:

$ terraform state push PATH

- Replace a resource provider:

$ terraform state replace-provider A B

- Taint a resource to force redeployment on apply:

$ terraform taint ADDRESS

- Untaint a previously tainted resource:

$ terraform untaint ADDRESS

Examples

Hello, World

This section contains the simplest possible Terraform module—one that just outputs "Hello, World"—to demonstrate how you can use Terratest to write automated tests for your Terraform code.

Note that this module does not do anything useful; it is just here to demonstrate the simplest usage pattern for Terratest. For a slightly more complicated example of a Terraform module and the corresponding tests, see terraform-basic-example.

$ cat << EOF > main.tf

terraform {

# This module is now only being tested with Terraform 0.13.x. However, to make upgrading easier, we are setting

# 0.12.26 as the minimum version, as that version added support for required_providers with source URLs, making it

# forwards compatible with 0.13.x code.

required_version = ">= 0.12.26"

}

# website::tag::1:: The simplest possible Terraform module: it just outputs "Hello, World!"

output "hello_world" {

value = "Hello, Redapt!"

}

EOF

- Run this module manually and locally with:

$ terraform init

$ terraform apply

- When you are done, run:

$ terraform destroy

Basic example #1

The following is a super simple example of how to use Terraform to spin up a single AWS EC2 instance.

- Create a working directory for your Terraform project:

$ mkdir -p ~/dev/terraform && cd ~/dev/terraform

- Create a Terraform file describing the AWS EC2 instance to create:

$ cat << EOF > instance.tf

provider "aws" {

access_key = "<REDACTED>"

secret_key = "<REDACTED>"

region = "us-west-2"

}

resource "aws_instance" "xtof-terraform" {

ami = "ami-a042f4d8" # CentOS 7.4

instance_type = "t2.micro"

}

EOF

- Initialize your Terraform working directory:

$ terraform init

- Create your EC2 instance:

$ terraform plan

$ terraform apply

Note: A better method to use is:

$ terraform plan -out myinstance.terraform

$ terraform apply myinstance.terraform

Run as two separate commands, terraform plan first shows the changes Terraform would make without making them, and terraform apply with the saved plan file then applies exactly the changes you saw on screen. A bare terraform apply computes a fresh plan, which may contain additional changes because the remote infrastructure can change or files could have been edited in the meantime (e.g., by someone else on your team). In short, always use the plan/apply file method.
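The plan-then-apply habit can be wrapped in a small shell function so that the two steps are never run apart. The following is a minimal sketch; the function name plan_then_apply and the default plan file name are illustrative choices, not part of Terraform.

```shell
# plan_then_apply PLANFILE: save a plan to PLANFILE, then apply exactly that plan.
# The function name and the default file name are hypothetical.
plan_then_apply() {
  planfile="${1:-myinstance.tfplan}"
  # Stop here if planning fails, so a stale plan file is never applied.
  terraform plan -out "$planfile" || return 1
  terraform apply "$planfile"
}
```

Calling plan_then_apply myinstance.terraform runs the same two commands as in the note, and skips the apply step when planning fails.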

- Destroy the above instance:

$ terraform destroy

Basic example #2

The following builds on what we did in "Basic example #1", this time following a more "best practices" approach in which variables and credentials are kept in separate files. We will continue to build on these examples.

- Create a working directory (aws.create_ec2_instance) with the following files:

aws.create_ec2_instance/
├── .gitignore
├── instance.tf
├── provider.tf
├── terraform.tfvars
└── vars.tf

$ cat << EOF > .gitignore

# Compiled files

*.tfstate

*.tfstate.backup

# Variables files with secrets

*.tfvars

# Plan files

*.plan

# Certificate files

*.pem

*.pfx

*.crt

*.key

EOF

The contents of each of the above files should look like the following:

$ cat << 'EOF' > instance.tf

resource "aws_instance" "example" {

ami = "${lookup(var.AMIS, var.AWS_REGION)}"

instance_type = "t2.micro"

}

EOF

$ cat << 'EOF' > provider.tf

provider "aws" {

access_key = "${var.AWS_ACCESS_KEY}"

secret_key = "${var.AWS_SECRET_KEY}"

region = "${var.AWS_REGION}"

}

EOF

$ cat << EOF > terraform.tfvars

AWS_ACCESS_KEY = "<REDACTED>"

AWS_SECRET_KEY = "<REDACTED>"

EOF

$ cat << EOF > vars.tf

variable "AWS_ACCESS_KEY" {}

variable "AWS_SECRET_KEY" {}

variable "AWS_REGION" {

default = "us-west-2"

}

variable "AMIS" {

type = "map"

default = {

us-west-2 = "ami-b2d463d2"

us-east-1 = "ami-13be557e"

eu-west-1 = "ami-0d729a60"

}

}

EOF
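When a Terraform file that contains ${...} interpolation is written through a shell heredoc, the delimiter needs to be quoted ('EOF'). With an unquoted delimiter the shell attempts the expansion itself, which typically fails or writes a mangled file. A minimal sketch of the quoted form, writing into a scratch directory; the variable names workdir and literal_lines are illustrative:

```shell
# Scratch directory so the demonstration does not touch a real project.
workdir="$(mktemp -d)"

# Quoted delimiter: the body is written literally, ${...} included.
cat << 'EOF' > "$workdir/instance.tf"
resource "aws_instance" "example" {
  ami           = "${lookup(var.AMIS, var.AWS_REGION)}"
  instance_type = "t2.micro"
}
EOF

# Count the lines that still contain the untouched interpolation.
literal_lines="$(grep -cF '${lookup(var.AMIS, var.AWS_REGION)}' "$workdir/instance.tf")"
echo "$literal_lines"
```

The same quoting applies to every heredoc in this article whose body contains ${...}.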

- Initialize the Terraform working directory:

$ terraform init

- Now, "plan" your execution with:

$ terraform plan -out myinstance.plan

...

+ aws_instance.example

ami: "ami-b2d463d2"

associate_public_ip_address: "<computed>"

availability_zone: "<computed>"

ebs_block_device.#: "<computed>"

ephemeral_block_device.#: "<computed>"

instance_state: "<computed>"

instance_type: "t2.micro"

key_name: "<computed>"

network_interface_id: "<computed>"

placement_group: "<computed>"

private_dns: "<computed>"

private_ip: "<computed>"

public_dns: "<computed>"

public_ip: "<computed>"

root_block_device.#: "<computed>"

security_groups.#: "<computed>"

source_dest_check: "true"

subnet_id: "<computed>"

tenancy: "<computed>"

vpc_security_group_ids.#: "<computed>"

Plan: 1 to add, 0 to change, 0 to destroy.

- Now, "apply" (or actually create the EC2 instance):

$ terraform apply myinstance.plan

Basic example #3

- Pull down a Docker image

This example will create a very simple Terraform file that will pull down an image (ghost) from Docker Hub.

- Set up the environment:

$ mkdir -p terraform/ghost && cd terraform/ghost

- Create a Terraform script:

$ cat << EOF > main.tf

# Download the latest Ghost image

resource "docker_image" "image_id" {

name = "ghost:latest"

}

EOF

- Initialize Terraform:

$ terraform init

- Validate the Terraform file:

$ terraform validate

- List providers in the folder:

$ ls .terraform/plugins/linux_amd64/

- List providers used in the configuration:

$ terraform providers

.

└── provider.docker

- Terraform Plan:

$ terraform plan -out=project.plan

- Terraform Apply:

$ terraform apply "project.plan"

- List Docker ghost image:

$ docker image ls | grep ^ghost

ghost latest ebaf3206b9da 5 days ago 380MB

- Terraform Show:

$ terraform show

docker_image.image_id:

id = sha256:ebaf3206b9da09b0999b9d2db7c84bb6f78586b7b9f8595d046b7eca571a07f5ghost:latest

latest = sha256:ebaf3206b9da09b0999b9d2db7c84bb6f78586b7b9f8595d046b7eca571a07f5

name = ghost:latest

- Destroy Terraform project (i.e., do the reverse of the above):

$ terraform destroy

- Verify Docker image has been removed:

$ docker image ls | grep ^ghost

$ terraform show

Both of the above commands should return nothing.

- Deploy a Docker container

In this section, we will expand upon what we did above (pull down a Docker image) by creating a container.

- Start up a container running the Ghost Blog:

$ cat << 'EOF' > main.tf

# Download the latest Ghost image

resource "docker_image" "image_id" {

name = "ghost:latest"

}

# Start the Container

resource "docker_container" "container_id" {

name = "ghost_blog"

image = "${docker_image.image_id.latest}"

ports {

internal = "2368"

external = "80"

}

}

EOF

$ terraform validate

$ terraform plan -out=project.plan

$ terraform apply project.plan

- Verify that the Ghost blog is running:

$ docker container ls

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

babe7db87d51 ebaf3206b9da "docker-entrypoint.s…" 7 seconds ago Up 4 seconds 0.0.0.0:80->2368/tcp ghost_blog

$ curl -I localhost

HTTP/1.1 200 OK

X-Powered-By: Express

Cache-Control: public, max-age=0

Content-Type: text/html; charset=utf-8

Content-Length: 21694

ETag: W/"54be-JQLstl8ocjMgh3/fswe5SP78jTg"

Vary: Accept-Encoding

Date: Wed, 18 Sep 2019 22:03:04 GMT

Connection: keep-alive

$ curl -s localhost | grep -E "<title>"

<title>Ghost</title>

- Cleanup:

$ terraform destroy

Basics

Tainting and Untainting Resources

- Tainting Resources

taint - Manually mark a resource for recreation

untaint - Manually unmark a resource as tainted

- Tainting a resource:

$ terraform taint [NAME]

- Untainting a resource:

$ terraform untaint [NAME]

- Set up the environment:

$ cd terraform/basics

- Redeploy the Ghost image:

$ terraform apply

- Taint the Ghost blog resource:

$ terraform taint docker_container.container_id

- See what will be changed:

$ terraform plan

- Remove the taint on the Ghost blog resource:

$ terraform untaint docker_container.container_id

- Verify that the Ghost blog resource is untainted:

$ terraform plan
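On Terraform 0.15.2 and later, the same forced recreation can be requested at apply time with the -replace option, so the replacement shows up in the plan without first marking the resource in state. A minimal sketch, assuming such a version is installed; the helper name recreate is hypothetical:

```shell
# recreate ADDRESS: plan and apply a forced replacement of one resource.
# The helper name is illustrative; -replace needs Terraform 0.15.2 or later.
recreate() {
  address="$1"
  # Refuse to run without a resource address.
  [ -n "$address" ] || return 1
  terraform apply -replace="$address"
}
```

For the Ghost container used here, the call would be recreate docker_container.container_id.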

- Updating Resources

- Edit main.tf and change the image to ghost:alpine:

# Download the latest Ghost image

resource "docker_image" "image_id" {

name = "ghost:alpine"

}

# Start the Container

resource "docker_container" "container_id" {

name = "ghost_blog"

image = "${docker_image.image_id.latest}"

ports {

internal = "2368"

external = "80"

}

}

- Validate changes made to main.tf:

$ terraform validate

- See what changes will be applied:

$ terraform plan

- Apply image changes:

$ terraform apply

- List the Docker containers:

$ docker container ls

- See what image Ghost is using:

$ docker image ls | grep [IMAGE]

- Check again to see what changes will be applied:

$ terraform plan

- Apply container changes:

$ terraform apply

- See what image Ghost is now using:

$ docker image ls | grep [IMAGE]

- Cleaning up the environment

- Reset the environment:

$ terraform destroy

Confirm the destroy by typing yes.

- List the Docker images:

$ docker image ls

- Remove the Ghost blog image:

$ docker image rm ghost:latest

- Reset main.tf:

# Download the latest Ghost image

resource "docker_image" "image_id" {

name = "ghost:latest"

}

# Start the Container

resource "docker_container" "container_id" {

name = "ghost_blog"

image = "${docker_image.image_id.latest}"

ports {

internal = "2368"

external = "80"

}

}

Console and Output

- Working with the Terraform console

- Redeploy the Ghost image and container:

$ terraform apply

- Show the Terraform resources:

$ terraform show

- Start the Terraform console:

$ terraform console

- Type the following in the console to get the container's name:

docker_container.container_id.name

- Type the following in the console to get the container's IP:

docker_container.container_id.ip_address

Break out of the Terraform console by using Ctrl+C.

- Destroy the environment

$ terraform destroy

- Output the name and IP of the Ghost blog container

- Edit main.tf:

# Download the latest Ghost Image

resource "docker_image" "image_id" {

name = "ghost:latest"

}

# Start the Container

resource "docker_container" "container_id" {

name = "blog"

image = "${docker_image.image_id.latest}"

ports {

internal = "2368"

external = "80"

}

}

# Output the IP Address of the Container

output "ip_address" {

value = "${docker_container.container_id.ip_address}"

description = "The IP for the container."

}

# Output the Name of the Container

output "container_name" {

value = "${docker_container.container_id.name}"

description = "The name of the container."

}

- Validate changes:

$ terraform validate

- Apply changes to get output:

$ terraform apply

- Cleaning up the environment

- Reset the environment:

$ terraform destroy

Input variables

Input variables serve as parameters for a Terraform module. Each variable block declares and configures a single input variable.

- Syntax:

variable [NAME] {

[OPTION] = "[VALUE]"

}

- Arguments

Within the block body (between { }) is the configuration for the variable, which accepts the following arguments:

type (Optional) - If set, this defines the type of the variable. Valid values are string, list, and map (Terraform 0.12 and later also accept number, bool, and structured types such as map(string)).

default (Optional) - This sets a default value for the variable. If no default is provided, Terraform will raise an error if a value is not provided by the caller.

description (Optional) - A human-friendly description for the variable.

- Using variables during an apply:

$ terraform apply -var 'foo=bar'

- Set up the environment by editing main.tf:

# Define variables

variable "image_name" {

description = "Image for container."

default = "ghost:latest"

}

variable "container_name" {

description = "Name of the container."

default = "blog"

}

variable "int_port" {

description = "Internal port for container."

default = "2368"

}

variable "ext_port" {

description = "External port for container."

default = "80"

}

# Download the latest Ghost Image

resource "docker_image" "image_id" {

name = "${var.image_name}"

}

# Start the Container

resource "docker_container" "container_id" {

name = "${var.container_name}"

image = "${docker_image.image_id.latest}"

ports {

internal = "${var.int_port}"

external = "${var.ext_port}"

}

}

# Output the IP Address of the Container

output "ip_address" {

value = "${docker_container.container_id.ip_address}"

description = "The IP for the container."

}

output "container_name" {

value = "${docker_container.container_id.name}"

description = "The name of the container."

}

- Validate the changes:

$ terraform validate

- Plan the changes:

$ terraform plan

- Apply the changes using a variable:

$ terraform apply -var 'ext_port=8080'

- Change the container name:

$ terraform apply -var 'container_name=ghost_blog' -var 'ext_port=8080'

- Reset the environment:

$ terraform destroy -var 'ext_port=8080'

Maps and Lookups

In this section, we will create a map to specify different environment variables based on conditions. This will allow us to dynamically deploy infrastructure configurations based on information we pass to the deployment.

- Set up the environment:

$ cd terraform/basics

$ cat << EOF > variables.tf

variable "env" {

description = "env: dev or prod"

}

variable "image_name" {

type = "map"

description = "Image for container."

default = {

dev = "ghost:latest"

prod = "ghost:alpine"

}

}

variable "container_name" {

type = "map"

description = "Name of the container."

default = {

dev = "blog_dev"

prod = "blog_prod"

}

}

variable "int_port" {

description = "Internal port for container."

default = "2368"

}

variable "ext_port" {

type = "map"

description = "External port for container."

default = {

dev = "8081"

prod = "80"

}

}

EOF

$ cat << 'EOF' > main.tf

# Download the latest Ghost Image

resource "docker_image" "image_id" {

name = "${lookup(var.image_name, var.env)}"

}

# Start the Container

resource "docker_container" "container_id" {

name = "${lookup(var.container_name, var.env)}"

image = "${docker_image.image_id.latest}"

ports {

internal = "${var.int_port}"

external = "${lookup(var.ext_port, var.env)}"

}

}

EOF

- Plan the dev/prod deploy and apply:

$ terraform validate

$ terraform plan -out=tfdev_plan -var env=dev

$ terraform apply tfdev_plan

$ terraform plan -out=tfprod_plan -var env=prod

$ terraform apply tfprod_plan

- Destroy prod deployment:

$ terraform destroy -var env=prod -auto-approve

- Use Terraform console with environment variables:

$ export TF_VAR_env=prod

$ terraform console

- Execute a lookup:

lookup(var.ext_port, var.env)

- Exit the console (Ctrl+C), then unset the variable:

$ unset TF_VAR_env
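The console also reads expressions from standard input, so a lookup can be evaluated for a given environment without exporting TF_VAR_env and unsetting it afterwards. A minimal sketch; the helper name tf_eval is hypothetical:

```shell
# tf_eval ENV EXPRESSION: evaluate one expression in terraform console with
# var.env set to ENV for that single command.
tf_eval() {
  env_name="$1"
  expression="$2"
  # The TF_VAR_env assignment is scoped to this one terraform invocation.
  printf '%s\n' "$expression" | TF_VAR_env="$env_name" terraform console
}
```

With the variables.tf from this section, tf_eval prod 'lookup(var.ext_port, var.env)' evaluates the prod entry of ext_port.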

Terraform Workspaces

In this section, we will see how workspaces can help us deploy multiple environments. By using workspaces, we can deploy multiple environments simultaneously without the state files colliding.

- Terraform commands:

- workspace

- new, list, select, and delete Terraform workspaces

- Workspace subcommands:

- delete

- Delete a workspace

- list

- List Workspaces

- new

- Create a new workspace

- select

- Select a workspace

- show

- Show the name of the current workspace

- Set up the environment:

$ cd terraform/basics

- Create a dev workspace:

$ terraform workspace new dev

- Plan the dev deployment:

$ terraform plan -out=tfdev_plan -var env=dev

- Apply the dev deployment:

$ terraform apply tfdev_plan

- Create and switch to a prod workspace:

$ terraform workspace new prod

- Plan the prod deployment:

$ terraform plan -out=tfprod_plan -var env=prod

- Apply the prod deployment:

$ terraform apply tfprod_plan

- Select the default workspace:

$ terraform workspace select default

- Find what workspace we are using:

$ terraform workspace show

- Select the dev workspace:

$ terraform workspace select dev

- Destroy the dev deployment:

$ terraform destroy -var env=dev

- Select the prod workspace:

$ terraform workspace select prod

- Destroy the prod deployment:

$ terraform destroy -var env=prod
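Because each environment lives in its own workspace, the select, plan, and apply steps above can be grouped per environment. A minimal sketch; the helper name deploy_env is hypothetical, and the plan file naming simply mirrors tfdev_plan and tfprod_plan:

```shell
# deploy_env ENV: switch to the ENV workspace (creating it on first use),
# then plan and apply with var.env set to the same name.
deploy_env() {
  env_name="$1"
  # "workspace select" fails for a workspace that does not exist yet,
  # in which case "workspace new" creates it and switches to it.
  terraform workspace select "$env_name" || terraform workspace new "$env_name" || return 1
  terraform plan -out="tf${env_name}_plan" -var "env=${env_name}" || return 1
  terraform apply "tf${env_name}_plan"
}
```

Running deploy_env dev followed by deploy_env prod reproduces the two deployments above, each with its own state.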

Null Resources and Local-exec

In this section, we will utilize a Null Resource in order to perform local commands on our machine without having to deploy extra resources.

- Set up the environment:

$ cd terraform/basics

$ cat << 'EOF' > main.tf

# Download the latest Ghost Image

resource "docker_image" "image_id" {

name = "${lookup(var.image_name, var.env)}"

}

# Start the Container

resource "docker_container" "container_id" {

name = "${lookup(var.container_name, var.env)}"

image = "${docker_image.image_id.latest}"

ports {

internal = "${var.int_port}"

external = "${lookup(var.ext_port, var.env)}"

}

}

resource "null_resource" "null_id" {

provisioner "local-exec" {

command = "echo ${docker_container.container_id.name}:${docker_container.container_id.ip_address} >> container.txt"

}

}

EOF

- Reinitialize, validate, plan, and apply:

$ terraform init

$ terraform validate

$ terraform plan -out=tfplan -var env=dev

$ terraform apply tfplan

- View the contents of container.txt:

$ cat container.txt

- Destroy the deployment:

$ terraform destroy -auto-approve -var env=dev

Terraform Modules

This section will show how to use modules in Terraform.

- Set up the environment:

$ mkdir -p modules/{image,container}

$ touch modules/image/{main.tf,variables.tf,outputs.tf}

$ touch modules/container/{main.tf,variables.tf,outputs.tf}

- The Image Module

In this section, we will create our first Terraform module.

- Go to the image directory:

$ cd ~/terraform/basics/modules/image

$ cat << 'EOF' > main.tf

# Download the Image

resource "docker_image" "image_id" {

name = "${var.image_name}"

}

EOF

$ cat << EOF > variables.tf

variable "image_name" {

description = "Name of the image"

}

EOF

$ cat << 'EOF' > outputs.tf

output "image_out" {

value = "${docker_image.image_id.latest}"

}

EOF

- Initialize, validate, plan, and apply:

$ terraform init

$ terraform validate

$ terraform plan -out=tfplan -var 'image_name=ghost:alpine'

$ terraform apply -auto-approve tfplan

- Destroy the image:

$ terraform destroy -auto-approve -var 'image_name=ghost:alpine'

- The Container Module

In this section, we will continue working with Terraform modules by breaking out the container code into its own module.

Go to the container directory:

$ cd ~/terraform/basics/modules/container

$ cat << 'EOF' > main.tf

# Start the Container

resource "docker_container" "container_id" {

name = "${var.container_name}"

image = "${var.image}"

ports {

internal = "${var.int_port}"

external = "${var.ext_port}"

}

}

EOF

$ cat << EOF > variables.tf

variable "container_name" {}

variable "image" {}

variable "int_port" {}

variable "ext_port" {}

EOF

$ cat << 'EOF' > outputs.tf

# Output the IP Address of the Container

output "ip" {

value = "${docker_container.container_id.ip_address}"

}

output "container_name" {

value = "${docker_container.container_id.name}"

}

EOF

- Initialize, validate, plan, and apply:

$ terraform init

$ terraform plan -out=tfplan -var 'container_name=blog' -var 'image=ghost:alpine' -var 'int_port=2368' -var 'ext_port=80'

$ terraform apply tfplan

- The Root Module

In this section, we will refactor the root module to use the image and container modules we created in the previous two sections.

Go to the module directory:

$ cd ~/terraform/basics/modules/

$ touch {main.tf,variables.tf,outputs.tf}

$ cat << 'EOF' > main.tf

# Download the image

module "image" {

source = "./image"

image_name = "${var.image_name}"

}

# Start the container

module "container" {

source = "./container"

image = "${module.image.image_out}"

container_name = "${var.container_name}"

int_port = "${var.int_port}"

ext_port = "${var.ext_port}"

}

EOF

$ cat << EOF > variables.tf

variable "container_name" {

description = "Name of the container."

default = "blog"

}

variable "image_name" {

description = "Image for container."

default = "ghost:latest"

}

variable "int_port" {

description = "Internal port for container."

default = "2368"

}

variable "ext_port" {

description = "External port for container."

default = "80"

}

EOF

$ cat << 'EOF' > outputs.tf

output "ip" {

value = "${module.container.ip}"

}

output "container_name" {

value = "${module.container.container_name}"

}

EOF

- Initialize, plan, and apply:

$ terraform init

$ terraform plan -out=tfplan

$ terraform apply tfplan

- Destroy the deployment:

$ terraform destroy -auto-approve

Docker

Managing Docker Networks

In this section, we will use the docker_network Terraform resource.

- Set up the environment:

$ mkdir -p ~/terraform/docker/networks

$ cd ~/terraform/docker/networks

$ touch {variables.tf,images.tf,networks.tf,main.tf}

$ cat << EOF > variables.tf

variable "mysql_root_password" {

description = "The MySQL root password."

default = "P4sSw0rd0!"

}

variable "ghost_db_username" {

description = "Ghost blog database username."

default = "root"

}

variable "ghost_db_name" {

description = "Ghost blog database name."

default = "ghost"

}

variable "mysql_network_alias" {

description = "The network alias for MySQL."

default = "db"

}

variable "ghost_network_alias" {

description = "The network alias for Ghost"

default = "ghost"

}

variable "ext_port" {

description = "Public port for Ghost"

default = "8080"

}

EOF

$ cat << EOF > images.tf

resource "docker_image" "ghost_image" {

name = "ghost:alpine"

}

resource "docker_image" "mysql_image" {

name = "mysql:5.7"

}

EOF

$ cat << EOF > networks.tf

resource "docker_network" "public_bridge_network" {

name = "public_ghost_network"

driver = "bridge"

}

resource "docker_network" "private_bridge_network" {

name = "ghost_mysql_internal"

driver = "bridge"

internal = true

}

EOF

$ cat << 'EOF' > main.tf

resource "docker_container" "blog_container" {

name = "ghost_blog"

image = "${docker_image.ghost_image.name}"

env = [

"database__client=mysql",

"database__connection__host=${var.mysql_network_alias}",

"database__connection__user=${var.ghost_db_username}",

"database__connection__password=${var.mysql_root_password}",

"database__connection__database=${var.ghost_db_name}"

]

ports {

internal = "2368"

external = "${var.ext_port}"

}

networks_advanced {

name = "${docker_network.public_bridge_network.name}"

aliases = ["${var.ghost_network_alias}"]

}

networks_advanced {

name = "${docker_network.private_bridge_network.name}"

aliases = ["${var.ghost_network_alias}"]

}

}

resource "docker_container" "mysql_container" {

name = "ghost_database"

image = "${docker_image.mysql_image.name}"

env = [

"MYSQL_ROOT_PASSWORD=${var.mysql_root_password}"

]

networks_advanced {

name = "${docker_network.private_bridge_network.name}"

aliases = ["${var.mysql_network_alias}"]

}

}

EOF

- Initialize, validate, plan, and apply:

$ terraform init

$ terraform validate

$ terraform plan -out=tfplan -var 'ext_port=8082'

$ terraform apply tfplan

- Destroy the environment:

$ terraform destroy -auto-approve -var 'ext_port=8082'

- Fixing main.tf

We need to make sure the MySQL container starts before the Blog container does; otherwise, the Blog container will crash.

resource "docker_container" "mysql_container" {

name = "ghost_database"

image = "${docker_image.mysql_image.name}"

env = [

"MYSQL_ROOT_PASSWORD=${var.mysql_root_password}"

]

networks_advanced {

name = "${docker_network.private_bridge_network.name}"

aliases = ["${var.mysql_network_alias}"]

}

}

resource "null_resource" "sleep" {

depends_on = ["docker_container.mysql_container"]

provisioner "local-exec" {

command = "sleep 15s"

}

}

resource "docker_container" "blog_container" {

name = "ghost_blog"

image = "${docker_image.ghost_image.name}"

depends_on = ["null_resource.sleep", "docker_container.mysql_container"]

env = [

"database__client=mysql",

"database__connection__host=${var.mysql_network_alias}",

"database__connection__user=${var.ghost_db_username}",

"database__connection__password=${var.mysql_root_password}",

"database__connection__database=${var.ghost_db_name}"

]

ports {

internal = "2368"

external = "${var.ext_port}"

}

networks_advanced {

name = "${docker_network.public_bridge_network.name}"

aliases = ["${var.ghost_network_alias}"]

}

networks_advanced {

name = "${docker_network.private_bridge_network.name}"

aliases = ["${var.ghost_network_alias}"]

}

}

- Build a plan and apply:

$ terraform plan -out=tfplan -var 'ext_port=8082'

$ terraform apply tfplan
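A fixed sleep only guesses how long MySQL needs to start. As an alternative for the local-exec command, a polling loop can retry a readiness check until it succeeds. This is a minimal sketch and not the method used above; the function name wait_until and the idea of passing the check as a command are assumptions:

```shell
# wait_until ATTEMPTS DELAY COMMAND...: run COMMAND until it succeeds or has
# failed ATTEMPTS times, sleeping DELAY seconds after each failed try.
wait_until() {
  attempts="$1"
  delay="$2"
  shift 2
  try=1
  while [ "$try" -le "$attempts" ]; do
    # Succeed as soon as the check passes.
    "$@" && return 0
    try=$((try + 1))
    sleep "$delay"
  done
  # Every attempt failed.
  return 1
}
```

For example, wait_until 30 1 docker exec ghost_database mysqladmin ping --silent would poll roughly once per second, assuming the image provides mysqladmin and that this check suits your setup.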

Managing Docker Volumes

In this section, we will add a volume to our Ghost Blog/MySQL setup.

- Destroy the existing environment:

$ terraform destroy -auto-approve -var 'ext_port=8082'

- Set up an environment:

$ cp -r ~/terraform/docker/networks ~/terraform/docker/volumes

$ cd ../volumes/

$ cat << EOF > volumes.tf

resource "docker_volume" "mysql_data_volume" {

name = "mysql_data"

}

EOF

$ cat << 'EOF' > main.tf

resource "docker_container" "mysql_container" {

name = "ghost_database"

image = "${docker_image.mysql_image.name}"

env = [

"MYSQL_ROOT_PASSWORD=${var.mysql_root_password}"

]

volumes {

volume_name = "${docker_volume.mysql_data_volume.name}"

container_path = "/var/lib/mysql"

}

networks_advanced {

name = "${docker_network.private_bridge_network.name}"

aliases = ["${var.mysql_network_alias}"]

}

}

resource "null_resource" "sleep" {

depends_on = ["docker_container.mysql_container"]

provisioner "local-exec" {

command = "sleep 15s"

}

}

resource "docker_container" "blog_container" {

name = "ghost_blog"

image = "${docker_image.ghost_image.name}"

depends_on = ["null_resource.sleep", "docker_container.mysql_container"]

env = [

"database__client=mysql",

"database__connection__host=${var.mysql_network_alias}",

"database__connection__user=${var.ghost_db_username}",

"database__connection__password=${var.mysql_root_password}",

"database__connection__database=${var.ghost_db_name}"

]

ports {

internal = "2368"

external = "${var.ext_port}"

}

networks_advanced {

name = "${docker_network.public_bridge_network.name}"

aliases = ["${var.ghost_network_alias}"]

}

networks_advanced {

name = "${docker_network.private_bridge_network.name}"

aliases = ["${var.ghost_network_alias}"]

}

}

EOF

- Initialize, validate, plan, and apply:

$ terraform init

$ terraform validate

$ terraform plan -out=tfplan -var 'ext_port=8082'

$ terraform apply tfplan

- Inspect the Docker volume:

$ docker volume inspect mysql_data

- List the data in mysql_data:

$ sudo ls /var/lib/docker/volumes/mysql_data/_data

- Destroy the environment:

$ terraform destroy -auto-approve -var 'ext_port=8082'

Creating Swarm Services

In this section, we will convert our Ghost and MySQL containers over to using Swarm services. Swarm services are a more production-ready way of running containers (but not as good as using Kubernetes).

- Set up the environment:

$ cp -r volumes/ services

$ cd services

$ cat << EOF > variables.tf

variable "mysql_root_password" {

description = "The MySQL root password."

default = "P4sSw0rd0!"

}

variable "ghost_db_username" {

description = "Ghost blog database username."

default = "root"

}

variable "ghost_db_name" {

description = "Ghost blog database name."

default = "ghost"

}

variable "mysql_network_alias" {

description = "The network alias for MySQL."

default = "db"

}

variable "ghost_network_alias" {

description = "The network alias for Ghost"

default = "ghost"

}

variable "ext_port" {

description = "The public port for Ghost"

}

EOF

$ cat << EOF > images.tf

resource "docker_image" "ghost_image" {

name = "ghost:alpine"

}

resource "docker_image" "mysql_image" {

name = "mysql:5.7"

}

EOF

$ cat << EOF > networks.tf

resource "docker_network" "public_bridge_network" {

name = "public_network"

driver = "overlay"

}

resource "docker_network" "private_bridge_network" {

name = "mysql_internal"

driver = "overlay"

internal = true

}

EOF

$ cat << EOF > volumes.tf

resource "docker_volume" "mysql_data_volume" {

name = "mysql_data"

}

EOF

$ cat << 'EOF' > main.tf

resource "docker_service" "ghost-service" {

name = "ghost"

task_spec {

container_spec {

image = "${docker_image.ghost_image.name}"

env {

database__client = "mysql"

database__connection__host = "${var.mysql_network_alias}"

database__connection__user = "${var.ghost_db_username}"

database__connection__password = "${var.mysql_root_password}"

database__connection__database = "${var.ghost_db_name}"

}

}

networks = [

"${docker_network.public_bridge_network.name}",

"${docker_network.private_bridge_network.name}"

]

}

endpoint_spec {

ports {

target_port = "2368"

published_port = "${var.ext_port}"

}

}

}

resource "docker_service" "mysql-service" {

name = "${var.mysql_network_alias}"

task_spec {

container_spec {

image = "${docker_image.mysql_image.name}"

env {

MYSQL_ROOT_PASSWORD = "${var.mysql_root_password}"

}

mounts = [

{

target = "/var/lib/mysql"

source = "${docker_volume.mysql_data_volume.name}"

type = "volume"

}

]

}

networks = ["${docker_network.private_bridge_network.name}"]

}

}

EOF

- Initialize, validate, plan, and apply:

$ terraform init

$ terraform validate

$ terraform plan -out=tfplan -var 'ext_port=8082'

$ terraform apply tfplan

$ docker service ls

$ docker container ls

- Destroy the environment:

$ terraform destroy -auto-approve -var 'ext_port=8082'

Docker Secrets

In this section, we will explore using Terraform to store sensitive data with Docker Secrets.

- Setup the environment:

$ mkdir secrets && cd secrets

- Encode the password with Base64 (the -n flag keeps echo's trailing newline out of the encoded value):

$ echo -n 'p4sSWoRd0!' | base64

- Create Terraform files for this project:

$ cat << EOF > variables.tf

variable "mysql_root_password" {

default = "cDRzU1dvUmQwIQ=="

}

variable "mysql_db_password" {

default = "cDRzU1dvUmQwIQ=="

}

EOF

$ cat << EOF > images.tf

resource "docker_image" "mysql_image" {

name = "mysql:5.7"

}

EOF

$ cat << 'EOF' > secrets.tf

resource "docker_secret" "mysql_root_password" {

name = "root_password"

data = "${var.mysql_root_password}"

}

resource "docker_secret" "mysql_db_password" {

name = "db_password"

data = "${var.mysql_db_password}"

}

EOF

$ cat << EOF > networks.tf

resource "docker_network" "private_overlay_network" {

name = "mysql_internal"

driver = "overlay"

internal = true

}

EOF

$ cat << EOF > volumes.tf

resource "docker_volume" "mysql_data_volume" {

name = "mysql_data"

}

EOF

$ cat << 'EOF' > main.tf

resource "docker_service" "mysql-service" {

name = "mysql_db"

task_spec {

container_spec {

image = "${docker_image.mysql_image.name}"

secrets = [

{

secret_id = "${docker_secret.mysql_root_password.id}"

secret_name = "${docker_secret.mysql_root_password.name}"

file_name = "/run/secrets/${docker_secret.mysql_root_password.name}"

},

{

secret_id = "${docker_secret.mysql_db_password.id}"

secret_name = "${docker_secret.mysql_db_password.name}"

file_name = "/run/secrets/${docker_secret.mysql_db_password.name}"

}

]

env {

MYSQL_ROOT_PASSWORD_FILE = "/run/secrets/${docker_secret.mysql_root_password.name}"

MYSQL_DATABASE = "mydb"

MYSQL_PASSWORD_FILE = "/run/secrets/${docker_secret.mysql_db_password.name}"

}

mounts = [

{

target = "/var/lib/mysql"

source = "${docker_volume.mysql_data_volume.name}"

type = "volume"

}

]

}

networks = [

"${docker_network.private_overlay_network.name}"

]

}

}

EOF

- Initialize, validate, plan, and apply:

$ terraform init

$ terraform validate

$ terraform plan -out=tfplan

$ terraform apply tfplan

- Find the MySQL container:

$ docker container ls

- Use the exec command to log into the MySQL container:

$ docker container exec -it [CONTAINER_ID] /bin/bash

- Access MySQL:

$ mysql -u root -p

- Destroy the environment:

$ terraform destroy -auto-approve
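On Terraform 0.12 or later, the manual Base64 step can be avoided with the built-in base64encode function. The following is a minimal sketch, assuming the plain-text password is supplied at plan time instead of being stored as a default; the variable name mysql_root_password_plain is hypothetical and not part of the project above.

```hcl
# Hypothetical variable: holds the plain-text password and has no default,
# so it must be supplied via -var, a .tfvars file, or a TF_VAR_ environment variable.
variable "mysql_root_password_plain" {
  type        = string
  description = "The MySQL root password (plain text)."
}

resource "docker_secret" "mysql_root_password" {
  name = "root_password"

  # docker_secret expects Base64-encoded data; encode it here instead of by hand.
  data = base64encode(var.mysql_root_password_plain)
}
```

Note that the value still ends up in the Terraform state, so the state file should be treated as sensitive either way.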

Concepts

Provisioners

- File uploads

resource "aws_instance" "example" {

ami = "${lookup(var.AMIS, var.AWS_REGION)}"

instance_type = "t2.micro"

provisioner "file" {

source = "app.conf"

destination = "/etc/myapp.conf"

}

}

- Connection

# Copies the file as the instance_username user using SSH

provisioner "file" {

source = "conf/myapp.conf"

destination = "/etc/myapp.conf"

connection {

type = "ssh"

user = "${var.instance_username}"

password = "${var.instance_password}"

}

}

- Copy a script to the instance and execute it:

resource "aws_key_pair" "mykey" {

key_name = "christoph-aws-key"

#public_key = "ssh-rsa my-public-key"

public_key = "${file("${var.PATH_TO_PUBLIC_KEY}")}"

}

resource "aws_instance" "example" {

ami = "${lookup(var.AMIS, var.AWS_REGION)}"

instance_type = "t2.micro"

key_name = "${aws_key_pair.mykey.key_name}"

provisioner "file" {

source = "src/script.sh"

destination = "/tmp/script.sh"

}

provisioner "remote-exec" {

inline = [

"chmod +x /tmp/script.sh",

"sudo /tmp/script.sh"

]

}

connection {

type = "ssh"

user = "${var.instance_username}"

private_key = "${file("${var.PATH_TO_PRIVATE_KEY}")}"

}

}
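Terraform 0.12 and later require an explicit host in every connection block. The following is a sketch of the resource above in 0.12 syntax, reusing the variable names from the example and assuming the instance is reachable over its public IP address.

```hcl
resource "aws_instance" "example" {
  ami           = var.AMIS[var.AWS_REGION]
  instance_type = "t2.micro"
  key_name      = aws_key_pair.mykey.key_name

  # A resource-level connection block is shared by all provisioners below.
  connection {
    type        = "ssh"
    host        = self.public_ip # assumption: the instance is reachable on its public IP
    user        = var.instance_username
    private_key = file(var.PATH_TO_PRIVATE_KEY)
  }

  # Upload the script first ...
  provisioner "file" {
    source      = "src/script.sh"
    destination = "/tmp/script.sh"
  }

  # ... then make it executable and run it.
  provisioner "remote-exec" {
    inline = [
      "chmod +x /tmp/script.sh",
      "sudo /tmp/script.sh",
    ]
  }
}
```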

Outputs

Outputs define values that will be highlighted to the user when Terraform applies, and can be queried easily using the output command.

resource "aws_instance" "example" {

ami = "${lookup(var.AMIS, var.AWS_REGION)}"

instance_type = "t2.micro"

}

output "ip" {

value = "${aws_instance.example.public_ip}"

}

You can refer to any attribute by specifying the following elements in your variable:

- The resource type (e.g., aws_instance)

- The resource name (e.g., example)

- The attribute name (e.g., public_ip)

See here for a complete list of attributes for AWS EC2 instances.
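In Terraform 0.12 and later, the same three-part address can be written without the interpolation wrapper. A short sketch using the aws_instance.example resource from above (the output names are only illustrative):

```hcl
# Address format: <resource type>.<resource name>.<attribute name>
output "public_ip" {
  value = aws_instance.example.public_ip
}

output "private_ip" {
  value = aws_instance.example.private_ip
}
```

After an apply, a single value can be read back with, e.g., terraform output public_ip.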

- You can also use the attributes in a provisioner command or script:

resource "aws_instance" "example" {

ami = "${lookup(var.AMIS, var.AWS_REGION)}"

instance_type = "t2.micro"

provisioner "local-exec" {

command = "echo ${self.private_ip} >> private_ips.txt"

}

}

Terraform state

- Terraform keeps the remote state of the infrastructure

- It stores it in a file called terraform.tfstate

- There is also a backup of the previous state in terraform.tfstate.backup

- When you execute terraform apply, a new terraform.tfstate and backup are created

- This is how Terraform keeps track of the remote state

- If the remote state changes and you run terraform apply again, Terraform will make changes to meet the correct remote state again.

- E.g., if you manually terminate an instance that is managed by Terraform, it will be recreated the next time you run terraform apply.

- You can keep the terraform.tfstate in version control (e.g., git).

- This will give you a history of your terraform.tfstate file (which is just a big JSON file)

- This allows you to collaborate with other team members (however, you can get conflicts when two or more people make changes at the same time)

- Local state works well with simple setups. However, if your project involves multiple team members working on a larger setup, it is better to store your state remotely

- The Terraform state can be saved remotely, using the backend functionality in Terraform.

- Using a remote store for the Terraform state will ensure that you always have the latest version of the state.

- It avoids having to commit and push the terraform.tfstate file to version control.

- However, make sure the Terraform remote store you choose supports locking! (note: both S3 and Consul support locking)

- The default state is a local backend (the local Terraform state file)

- Other backends include:

- AWS S3 (with a locking mechanism using DynamoDB)

- Consul (with locking)

- Terraform Enterprise (the commercial solution)

- Using the backend functionality has definite benefits:

- Working in a team, it allows for collaboration (the remote state will always be available for the whole team)

- The state file is not stored locally, so possibly sensitive information is only stored in the remote state

- Some backends will enable remote operations. The terraform apply will then run completely remotely. These are called enhanced backends.

- There are two steps to configure a remote state:

- Add the backend code to a .tf file

- Run the initialization process

- Consul backend

- To configure a Consul remote store, you can add a file (backend.tf) with the following contents:

terraform {

backend "consul" {

address = "demo.consul.io" # hostname of consul cluster

path = "terraform/myproject"

}

}

- S3 backend

- Create a backend.tf file with (note: you cannot use Terraform variables in your backend .tf file):

terraform {

backend "s3" {

bucket = "mybucket"

key = "terraform/myproject.json"

region = "us-west-2"

}

}

- Then initialize with:

$ terraform init

$ cat myproject.json | jq -crM '.modules[].resources."aws_instance.example".primary.attributes.public_ip'

1.2.3.4
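The S3 backend only locks state when it is given a DynamoDB table. The following is a sketch of the same backend with locking enabled, for the Terraform versions covered here; the table name terraform-locks is a hypothetical placeholder, and the table is assumed to exist already.

```hcl
terraform {
  backend "s3" {
    bucket = "mybucket"
    key    = "terraform/myproject.json"
    region = "us-west-2"

    # Hypothetical table name. The table must already exist and needs a
    # string partition key named "LockID".
    dynamodb_table = "terraform-locks"
  }
}
```

After changing the backend configuration, terraform init has to be run again.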

- Configure a read-only remote store directly in the .tf file (note: this is actually a "datasource"):

data "terraform_remote_state" "aws-state" {

backend = "s3"

config {

bucket = "mybucket"

key = "terraform.tfstate"

access_key = "${var.AWS_ACCESS_KEY}"

secret_key = "${var.AWS_SECRET_KEY}"

region = "${var.AWS_REGION}"

}

}
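In Terraform 0.12 and later, config is an argument instead of a nested block, and the remote values are read through the outputs attribute. A sketch under two assumptions: AWS credentials come from the environment, and the remote configuration declares an output named ip (as in the Outputs section).

```hcl
data "terraform_remote_state" "aws-state" {
  backend = "s3"

  # In 0.12+, config is assigned with "=" as a map.
  config = {
    bucket = "mybucket"
    key    = "terraform.tfstate"
    region = var.AWS_REGION
  }
}

# Assumption: the remote configuration declares an output named "ip".
output "remote_ip" {
  value = data.terraform_remote_state.aws-state.outputs.ip
}
```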

Datasources

- For certain providers (e.g., AWS), Terraform provides "datasources"

- Datasources provide you with dynamic information

- A lot of data is available from AWS in a structured format using their API (e.g., list of AMIs, list of availability zones, etc.)

- Terraform also exposes this information using datasources

- Another example is a datasource that provides you with a list of all IP addresses in use by AWS (useful if you want to filter traffic based on an AWS region)

- E.g., Allow all traffic from AWS EC2 instances in Europe

- Filtering traffic in AWS can also be done using security groups

- Incoming and outgoing traffic can be filtered by protocol, IP range, and port

- Example datasource:

data "aws_ip_ranges" "european_ec2" {

regions = ["eu-west-1", "eu-central-1"]

services = ["ec2"]

}

resource "aws_security_group" "from_europe" {

name = "from_europe"

ingress {

from_port = "443"

to_port = "443"

protocol = "tcp"

cidr_blocks = ["${data.aws_ip_ranges.european_ec2.cidr_blocks}"]

}

tags {

CreateDate = "${data.aws_ip_ranges.european_ec2.create_date}"

SyncToken = "${data.aws_ip_ranges.european_ec2.sync_token}"

}

}
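The list of availability zones mentioned above is exposed in the same way. A minimal sketch in 0.12 syntax; the names available and first_az are only illustrative.

```hcl
# Look up the availability zones that are currently usable in the
# region the AWS provider is configured for.
data "aws_availability_zones" "available" {
  state = "available"
}

output "first_az" {
  # names is a list of zone names; take the first one.
  value = data.aws_availability_zones.available.names[0]
}
```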

Template provider

- The template provider can help with creating customized configuration files

- You can build templates based on variables from Terraform resource attributes (e.g., a public IP address)

- The result is a string, which can be used as a variable in Terraform

- The string contains a template (e.g., a configuration file)

- Can be used to create generic templates or cloud init configs

- In AWS, you can pass commands that need to be executed when the instance starts for the first time (called "user-data")

- If you want to pass user-data that depends on other information in Terraform (e.g., IP addresses), you can use the template provider

- Example template provider

- First, create a template file:

$ cat << 'EOF' > templates/init.tpl

#!/bin/bash

echo "database-ip = ${myip}" >> /etc/myapp.config

EOF

- Then, create a template_file data source that will read the template file and replace ${myip} with the IP address of an AWS instance created by Terraform:

data "template_file" "my-template" {

template = "${file("templates/init.tpl")}"

vars {

myip = "${aws_instance.database1.private_ip}"

}

}

- Finally, use the "my-template" data source when creating a new instance:

resource "aws_instance" "web" {

...

user_data = "${data.template_file.my-template.rendered}"

...

}

When Terraform runs, it will see that it first needs to spin up the database1 instance, then generate the template, and only then spin up the web instance.

The web instance will have the template injected in the user-data, and when it launches, the user-data will create a file (/etc/myapp.config) with the IP address of the database.
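Terraform 0.12 and later also ship a built-in templatefile function that renders a template without the separate template provider. The following is a sketch of the web instance using it, assuming the same templates/init.tpl file and database1 instance as above; the ami and instance_type values are borrowed from the earlier examples and stand in for the elided arguments.

```hcl
resource "aws_instance" "web" {
  ami           = var.AMIS[var.AWS_REGION]
  instance_type = "t2.micro"

  # Render templates/init.tpl, substituting ${myip} with the database's private IP.
  user_data = templatefile("${path.module}/templates/init.tpl", {
    myip = aws_instance.database1.private_ip
  })
}
```

The dependency ordering is the same as with the data source: the reference to aws_instance.database1 makes Terraform create the database instance first.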

Modules

- You can use modules to make your Terraform project more organized

- You can use third-party modules (e.g., modules from GitHub)

- You can re-use parts of your code (e.g., to set up a network in AWS -> VPC)

- Example of using a module from GitHub:

module "module-example" {

source = "github.com/foobar/terraform-module-example"

}

- Use a module from a local folder:

module "module-example" {

source = "./module-example"

}

- Pass arguments to a module:

module "module-example" {

source = "./module-example"

region = "us-west-2"

ip-range = "10.0.0.0/8"

cluster-size = "3"

}

- Inside the module folder (e.g., module-example), you just have the normal Terraform files:

$ cat module-example/vars.tf

# the module input parameters

variable "region" {}

variable "ip-range" {}

variable "cluster-size" {}

$ cat module-example/cluster.tf

# variables can be used here

resource "aws_instance" "instance-1" {}

...

$ cat module-example/output.tf

output "aws-cluster" {

value = "${aws_instance.instance-1.public_ip},${aws_instance.instance-2.public_ip},..."

}

- Use the output from the module in the main part of your code:

output "some-output" {

value = "${module.module-example.aws-cluster}"

}
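In Terraform 0.12 and later, module inputs can carry type constraints, descriptions, and defaults, which makes the module's interface easier to read. The following is a sketch of module-example/vars.tf in that style; the descriptions and the default value are assumptions added for illustration.

```hcl
# module-example/vars.tf (0.12+ syntax)

variable "region" {
  type        = string
  description = "AWS region to deploy into."
}

variable "ip-range" {
  type        = string
  description = "CIDR block for the network."
}

variable "cluster-size" {
  type        = number
  description = "Number of instances in the cluster."
  default     = 3 # assumption: used when the caller does not pass cluster-size
}
```

With the number type, the caller can pass cluster-size = 3 without quoting the value.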

Terraform 0.11 vs 0.12

- Terraform 0.12 Enhancements

- Configuration is easier to read and reason about

- Consistent, predictable behaviour in complex functions

- Improved support for loosely-coupled modules

- Terraform 0.11

variable "count" { default = 1 }

variable "default_prefix" { default = "linus" }

variable "zoo_enabled" {

default = "0"

}

variable "prefix_list" {

default = []

}

resource "random_pet" "my_pet" {

count = "${var.count}"

prefix = "${var.zoo_enabled == "0" ? var.default_prefix : element(concat(var.prefix_list, list("")), count.index)}"

}

- Terraform 0.12

variable "pet_count" { default = 1 }

variable "default_prefix" { default = "linus" }

variable "zoo_enabled" {

default = false

}

variable "prefix_list" {

default = []

}

resource "random_pet" "my_pet" {

count = var.pet_count

prefix = var.zoo_enabled ? element(var.prefix_list, count.index) : var.default_prefix

}

- For-each in Terraform 0.12

locals {

standard_tags = {

Component = "user-service"

Environment = "production"

}

}

resource "aws_autoscaling_group" "example" {

# ...

dynamic "tag" {

for_each = local.standard_tags

content {

key = tag.key

value = tag.value

propagate_at_launch = true

}

}

}
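Besides dynamic blocks, for_each can also be set on a whole resource (from Terraform 0.12.6 onward) to create one instance per element. A small sketch with the random_pet resource used earlier; the set values and the output name are only illustrative.

```hcl
resource "random_pet" "named" {
  # Resource-level for_each needs Terraform 0.12.6+; it takes a map or a set of strings.
  for_each = toset(["web", "db"])

  # each.key is the current element of the set.
  prefix = each.key
}

output "pet_names" {
  # Build a map from each key to the generated pet name.
  value = { for k, pet in random_pet.named : k => pet.id }
}
```

Unlike count, the instances are addressed by key (e.g., random_pet.named["web"]), so removing one element does not shift the others.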

Install Terraform Enterprise (TFE)

Pre-Install Setup

- Initial setup:

$ sudo yum update -y

$ sudo yum install -y yum-utils device-mapper-persistent-data lvm2

$ sudo yum install https://dl.fedoraproject.org/pub/epel/epel-release-latest-7.noarch.rpm

$ sudo setenforce 0

$ sudo vi /etc/selinux/config # set to 'permissive'

$ sestatus

- Install Docker (note: You must install a version of Docker supported by TFE):

$ sudo yum-config-manager --add-repo https://download.docker.com/linux/centos/docker-ce.repo

$ sudo yum list docker-ce-cli.x86_64 --showduplicates | sort -r

$ sudo yum install docker-ce-18.09.9-3.el7 docker-ce-cli-18.09.9-3.el7 -y

$ sudo usermod -aG docker $(whoami)

$ sudo systemctl enable docker && sudo systemctl start docker

- Test the Docker setup:

$ docker version

$ docker ps

$ docker run hello-world

- Lock down the version of Docker installed (i.e., prevent yum update from updating to newer versions):

$ sudo yum -y install yum-versionlock

$ sudo yum versionlock add docker-ce docker-ce-cli

$ yum versionlock list docker-ce docker-ce-cli

- Create a secrets file for API calls to CloudFlare:

$ mkdir .secrets

$ chmod 0700 .secrets/

$ cat << EOF > .secrets/cloudflare.ini

dns_cloudflare_email = "cloudsupport@example.com"

dns_cloudflare_api_key = "<redacted>"

EOF

$ chmod 0600 .secrets/cloudflare.ini

- Install certbot (python2-certbot-nginx is only needed if using Nginx, python2-certbot-dns-cloudflare only if using CloudFlare):

$ sudo yum install -y certbot \

python2-certbot-nginx \

python2-certbot-dns-cloudflare.noarch

- Generate Let's Encrypt TLS certificates (for use with CloudFlare):

$ sudo certbot certonly \

--dns-cloudflare \

--dns-cloudflare-credentials ~/.secrets/cloudflare.ini \

-d terraform.example.com

The above certbot command will create the following (relevant) files:

/etc/letsencrypt/live/terraform.example.com/fullchain.pem /etc/letsencrypt/live/terraform.example.com/privkey.pem

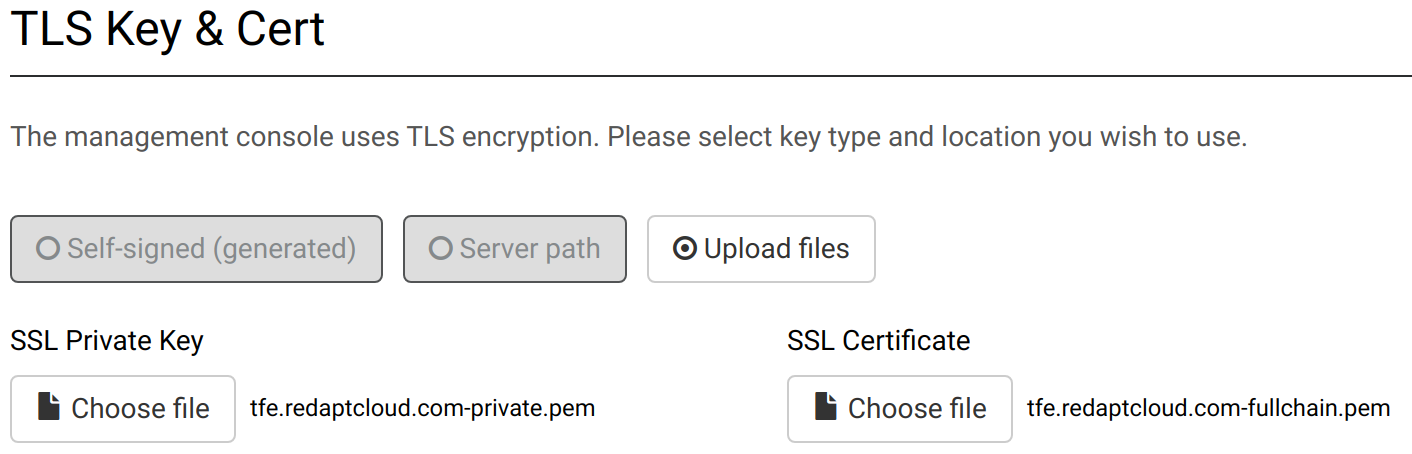

You will use the above files during the TFE install via the UI as shown here:

- Create aliases for ReplicateD (useful for CLI operations):

$ cat << EOF >> ~/.bashrc

source /etc/replicated.alias

EOF

Install Terraform Enterprise (TFE)

- Install TFE:

$ curl https://install.terraform.io/ptfe/stable | sudo bash

- Check on Docker containers deployed:

$ docker ps --format '{{.Names}} {{.Ports}}'

hardcore_poitras

ptfe_archivist 0.0.0.0:7675->7675/tcp

ptfe_sidekiq

ptfe_registry_api

ptfe_atlas 0.0.0.0:9292->9292/tcp

ptfe_registry_worker

ptfe_build_manager

ptfe_vault 0.0.0.0:8200->8200/tcp

ptfe_postgres 0.0.0.0:5432->5432/tcp

rabbitmq 0.0.0.0:5672->5672/tcp, 0.0.0.0:32780->4369/tcp, 0.0.0.0:32779->5671/tcp, 0.0.0.0:32778->25672/tcp

ptfe_nginx 0.0.0.0:80->80/tcp, 0.0.0.0:443->443/tcp, 0.0.0.0:23001->8080/tcp

ptfe_backup_restore 0.0.0.0:23009->23009/tcp

ptfe_ingress 0.0.0.0:7586->7586/tcp

ptfe_nomad 0.0.0.0:23020->23020/tcp

influxdb 0.0.0.0:8086->8086/tcp

telegraf 0.0.0.0:23010->23010/udp, 0.0.0.0:32771->8092/udp, 0.0.0.0:32777->8094/tcp, 0.0.0.0:32770->8125/udp

ptfe_redis 0.0.0.0:6379->6379/tcp

ptfe_state_parser 0.0.0.0:7588->7588/tcp

replicated-statsd 0.0.0.0:32776->2003/tcp, 0.0.0.0:32775->2004/tcp, 0.0.0.0:32774->2443/tcp, 0.0.0.0:32769->8125/udp

ptfe-health-check 0.0.0.0:23005->23005/tcp

retraced-processor 3000/tcp

retraced-api 0.0.0.0:9873->3000/tcp

retraced-cron 3000/tcp

retraced-postgres 5432/tcp

retraced-nsqd 4150-4151/tcp, 4160-4161/tcp, 4170-4171/tcp

replicated-premkit 80/tcp, 443/tcp, 2080/tcp, 0.0.0.0:9880->2443/tcp

replicated 0.0.0.0:9874-9879->9874-9879/tcp

replicated-ui 0.0.0.0:8800->8800/tcp

replicated-operator

$ sudo du -hs /var/lib/docker

11G /var/lib/docker

Troubleshooting

Since we just had TFE create a self-signed cert, we cannot do the following:

$ cat backend.tf

terraform {

backend "remote" {

hostname = "tfe.example.com"

organization = "Redapt"

token = "<redacted>"

workspaces {

name = "tfe-rancher-dev-networking"

}

}

}

$ terraform init

Error initializing new backend:

Error configuring the backend "remote": Failed to request discovery document: Get https://tfe.example.com/.well-known/terraform.json: x509: certificate signed by unknown authority

$ terraform login tfe.example.com

Error: Service discovery failed for tfe.example.com

Failed to request discovery document: Get https://tfe.example.com/.well-known/terraform.json: x509: certificate signed by unknown authority.

So, let's use Let's Encrypt to generate a "valid" TLS cert.

Let's Encrypt

The following are my troubleshooting notes on how to set up Let's Encrypt (LE) TLS certificates for use with Terraform Enterprise (TFE) using certbot.

- Install certbot:

$ sudo yum install https://dl.fedoraproject.org/pub/epel/epel-release-latest-7.noarch.rpm

$ sudo yum install certbot python2-certbot-nginx python2-certbot-dns-cloudflare

- Use certbot to create Let's Encrypt TLS certificates (and register the DNS changes with CloudFlare):

$ mkdir ~/.secrets

$ cat << EOF > ~/.secrets/cloudflare.ini

dns_cloudflare_email = "cloudsupport@example.com"

dns_cloudflare_api_key = "<redacted>"

EOF

$ chmod 0700 ~/.secrets && chmod 0600 ~/.secrets/cloudflare.ini

$ sudo certbot certonly \

--dns-cloudflare \

--dns-cloudflare-credentials \

~/.secrets/cloudflare.ini \

-d tfe.example.com

Saving debug log to /var/log/letsencrypt/letsencrypt.log

Plugins selected: Authenticator dns-cloudflare, Installer None

Starting new HTTPS connection (1): acme-v02.api.letsencrypt.org

Obtaining a new certificate

Performing the following challenges:

dns-01 challenge for tfe.example.com

Starting new HTTPS connection (1): api.cloudflare.com

Waiting 10 seconds for DNS changes to propagate

Waiting for verification...

Cleaning up challenges

Starting new HTTPS connection (1): api.cloudflare.com

IMPORTANT NOTES:

- Congratulations! Your certificate and chain have been saved at:

/etc/letsencrypt/live/tfe.example.com/fullchain.pem

Your key file has been saved at:

/etc/letsencrypt/live/tfe.example.com/privkey.pem

Your cert will expire on 2020-08-11. To obtain a new or tweaked

version of this certificate in the future, simply run certbot

again. To non-interactively renew *all* of your certificates, run

"certbot renew"

- Upload the following TLS cert files to the TFE Console UI (https://tfe.example.com:8800/console/settings):

/etc/letsencrypt/live/tfe.example.com/privkey.pem

/etc/letsencrypt/live/tfe.example.com/fullchain.pem

- Check connections:

$ curl -ks "https://tfe.example.com/.well-known/terraform.json" | jq .

{

"modules.v1": "/api/registry/v1/modules/",

"state.v2": "/api/v2/",

"tfe.v2": "/api/v2/",

"tfe.v2.1": "/api/v2/",

"tfe.v2.2": "/api/v2/",

"versions.v1": "https://checkpoint-api.hashicorp.com/v1/versions/"

}

$ true | openssl s_client -connect vpn01.example.com:443 2>/dev/null

CONNECTED(00000005)

---

Certificate chain

0 s:CN = vpn01.example.com

i:C = US, O = Let's Encrypt, CN = Let's Encrypt Authority X3

1 s:C = US, O = Let's Encrypt, CN = Let's Encrypt Authority X3

i:O = Digital Signature Trust Co., CN = DST Root CA X3

$ true | openssl s_client -connect tfe.example.com:443 2>/dev/null

CONNECTED(00000005)

---

Certificate chain

0 s:C = USA, O = "Replicated, Inc.", OU = On-Prem Daemon, CN = tfe.example.com

i:C = USA, O = Replicated-aad03ef3, OU = CA

$ openssl rsa -check -noout -in tfe.example.com-private.pem

RSA key ok

$ openssl x509 -in tfe.example.com-fullchain.pem -noout -issuer

issuer=C = US, O = Let's Encrypt, CN = Let's Encrypt Authority X3

$ openssl x509 -in tfe.example.com-fullchain.pem -text -noout | head

Certificate:

Data:

Version: 3 (0x2)

Serial Number:

04:7c:de:e0:d6:8f:28:ec:85:ec:24:23:a6:78:99:be:28:10

Signature Algorithm: sha256WithRSAEncryption

Issuer: C = US, O = Let's Encrypt, CN = Let's Encrypt Authority X3

Validity

Not Before: May 13 19:10:49 2020 GMT

Not After : Aug 11 19:10:49 2020 GMT

- Check DNS records:

$ dig TXT _acme-challenge.tfe.example.com +short

# Should return something that looks like this:

"GungAThu5sg63DuvJ1U3egVgRIyhzLDQ7MQylzEW1Z4"

Reboot VM

Reboot the VM in order to make sure that everything is persistent.

$ docker logs replicated-ui

INFO 2020-05-13T22:01:20+00:00 daemon/daemon.go:160 Starting Replicated UI version 2.42.5 (git="313c050", date="2020-03-17 02:52:33 +0000 UTC")

WARN 2020-05-13T22:01:20+00:00 ipc/call.go:225 Cannot connect to the Replicated daemon. Is 'replicated -d' running on this host?

INFO 2020-05-13T22:01:20+00:00 daemon/daemon.go:521 Retrieving TLS cert from daemon...

WARN 2020-05-13T22:01:20+00:00 daemon/daemon.go:545 Unable to get console settings from daemon: Cannot connect to the Replicated daemon. Is 'replicated -d' running on this host?

WARN 2020-05-13T22:01:20+00:00 daemon/daemon.go:546 Continuing to try...

$ sudo systemctl status replicated

● replicated.service - Replicated Service

Loaded: loaded (/etc/systemd/system/replicated.service; enabled; vendor preset: disabled)

Active: activating (auto-restart) (Result: exit-code) since Wed 2020-05-13 22:06:48 UTC; 4s ago

Process: 21727 ExecStop=/usr/bin/docker stop replicated (code=exited, status=0/SUCCESS)

Process: 21249 ExecStart=/usr/bin/docker run --name=replicated -p 9874-9879:9874-9879/tcp -u 1001:994 -v /var/lib/replicated:/var/lib/replicated -v /var/run/docker.sock:/host/var/run/docker.sock -v /proc:/host/proc:ro -v /etc:/host/etc:ro -v /etc/os-release:/host/etc/os-release:ro -v /etc/pki/ca-trust/extracted/pem/tls-ca-bundle.pem:/etc/ssl/certs/ca-certificates.crt -v /var/run/replicated:/var/run/replicated --security-opt label=type:spc_t -e LOCAL_ADDRESS=${PRIVATE_ADDRESS} -e RELEASE_CHANNEL=${RELEASE_CHANNEL} $REPLICATED_OPTS quay.io/replicated/replicated:current (code=exited, status=1/FAILURE)

Process: 21246 ExecStartPre=/bin/chmod -R 755 /var/lib/replicated/tmp (code=exited, status=0/SUCCESS)

Process: 21243 ExecStartPre=/bin/chown -R 1001:994 /var/run/replicated /var/lib/replicated (code=exited, status=0/SUCCESS)

Process: 21241 ExecStartPre=/bin/mkdir -p /var/run/replicated /var/lib/replicated /var/lib/replicated/statsd (code=exited, status=0/SUCCESS)

Process: 21230 ExecStartPre=/usr/bin/docker rm -f replicated (code=exited, status=0/SUCCESS)

Main PID: 21249 (code=exited, status=1/FAILURE)

May 13 22:06:48 hashi-tfe systemd[1]: Unit replicated.service entered failed state.

May 13 22:06:48 hashi-tfe systemd[1]: replicated.service failed.

- Journal logs for Docker service:

$ sudo journalctl -fu docker

May 13 22:30:03 hashi-tfe dockerd[1567]: time="2020-05-13T22:30:03.961044696Z" level=error msg="Handler for POST /auth returned error: Get https://192.168.254.221:9874/v2/: dial tcp 192.168.254.221:9874: connect: connection refused"

May 13 22:30:04 hashi-tfe dockerd[1567]: time="2020-05-13T22:30:04.054437153Z" level=info msg="ignoring event" module=libcontainerd namespace=moby topic=/tasks/delete type="*events.TaskDelete"

May 13 22:30:04 hashi-tfe dockerd[1567]: time="2020-05-13T22:30:04.648018267Z" level=warning msg="Failed to allocate and map port 9880-9880: Bind for 0.0.0.0:9880 failed: port is already allocated"

May 13 22:30:04 hashi-tfe dockerd[1567]: time="2020-05-13T22:30:04.717243067Z" level=error msg="4e24998f3bfe2f2ebd0fb7d5b693344a9c52e5e77e765cc37c4375c9df4ada14 cleanup: failed to delete container from containerd: no such container"

May 13 22:30:04 hashi-tfe dockerd[1567]: time="2020-05-13T22:30:04.717368312Z" level=error msg="Handler for POST /containers/4e24998f3bfe2f2ebd0fb7d5b693344a9c52e5e77e765cc37c4375c9df4ada14/start returned error: driver failed programming external connectivity on endpoint replicated-premkit (8ef00bad7c58a67dbc4bde46af4dfe9f66a2297a047ee013063ba63e3b95e1e1): Bind for 0.0.0.0:9880 failed: port is already allocated"

Miscellaneous

- Convert YAML to HCL:

$ echo 'yamldecode(file("my-manifest-file.yaml"))' | terraform console
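The same yamldecode call can be used inside a configuration (Terraform 0.12+) to work with the parsed values directly. A minimal sketch, assuming the file sits next to the configuration and has a top-level kind key, as Kubernetes manifests do; the names manifest and manifest_kind are hypothetical.

```hcl
locals {
  # The parsed YAML becomes an ordinary Terraform object/map/list value.
  manifest = yamldecode(file("${path.module}/my-manifest-file.yaml"))
}

output "manifest_kind" {
  # Assumption: the YAML document has a top-level "kind" key.
  value = local.manifest.kind
}
```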

See also: tfk8s

Bash completion

$ cat << 'EOF' | sudo tee /etc/bash_completion.d/terraform

_terraform()

{

local cmds cur colonprefixes

cmds="apply destroy fmt get graph import init \

output plan push refresh remote show taint \

untaint validate version state"

COMPREPLY=()

cur=${COMP_WORDS[COMP_CWORD]}

# Work-around bash_completion issue where bash interprets a colon

# as a separator.

# Work-around borrowed from the darcs work-around for the same

# issue.

colonprefixes=${cur%"${cur##*:}"}

COMPREPLY=( $(compgen -W '$cmds' -- $cur))

local i=${#COMPREPLY[*]}

while [ $((--i)) -ge 0 ]; do

COMPREPLY[$i]=${COMPREPLY[$i]#"$colonprefixes"}

done

return 0

} &&

complete -F _terraform terraform

EOF

See also

- Ansible

- Pulumi

- tfenv

- tflint

- terrafirma

- tfsec

- terrascan (no TF 0.13 support at this time)

- checkov

- conftest

- terratest

- pre-commit-terraform: Collection of git hooks for Terraform to be used with the pre-commit framework

- infracost: Show the cloud cost of each infrastructure change in CI/CD

External links

- Official website

- Amazon EC2 AMI Locator — find the AWS AMIs for Ubuntu images

- Cloud/AWS CentOS — find the AWS AMIs for CentOS images